When talking about big data, the term data lake is often used. The term was originally introduced by James Dixon, CTO of Pentaho, and refers to gathering all available data so it can be used in a big data strategy. James Dixon was right to introduce the term, and collecting all data can be part of your big data strategy. However, you need to ensure your data lake does not turn into a data swamp. Gartner warns about the data lake approach in the post “Gartner Says Beware of the Data Lake Fallacy” on the Gartner website.

In my recent post on the Capgemini website I go into detail on Oracle Enterprise Metadata Management and other Oracle products that can be used in combination with the Capgemini Big Data approach to ensure enterprises get the best possible benefits from implementing a Big Data strategy.

Capgemini promotes a flow that includes Acquisition, Marshalling, Analysis and Action steps, all supported by a Master Data Management & Data Governance solution.

The personal view on the IT world of Johan Louwers, specially focusing on Oracle technology, Linux and UNIX technology, programming languages and all kinds of nice and cool things happening in the IT world.

Sunday, December 28, 2014

Saturday, December 27, 2014

Using Oracle Weblogic for building Enterprise Portal functions

Modern enterprises increasingly demand that their IT organizations take the role of a service provider and deliver services with minimal lead time on a pay-per-use model. Enterprise business users, and even the IT organizations themselves, have a growing desire to request services, in the widest sense of the word, directly in a self-service manner. Modern cloud solutions, and especially hybrid cloud solutions, potentially provide the answer to this demand.

Building a portal to help enterprises meet this demand can be done using many techniques and many architectures. In a recent blog post on the Capgemini website I took the first step towards an open blueprint for an enterprise portal. The solution is not open source; however, the architecture is open and free for everyone to use. The solution is based upon Oracle Weblogic and other Oracle components. The intention is to publish a set of posts over time to expand on this subject.

The full article, named "The need for Enterprise Self Service Portals", can be found on the Oracle blog at capgemini.com, among other articles I wrote for this website.

Friday, November 07, 2014

Enabling parallel DML on Oracle Exadata

When using Oracle Exadata you can make use of parallelism on a number of levels and within a number of processes. However, when doing a massive data import the level of parallelism might be a bit disappointing at first. The reason for this is that by default not all parallel options are activated. When you do a data import you want parallel DML (Data Manipulation Language) to be enabled.

You can check the current setting of parallel DML by querying V$SESSION for PDML_STATUS and PDML_ENABLED, as in the example query below:

SELECT pq_status, pdml_status, pddl_status, pdml_enabled FROM v$session WHERE sid = SYS_CONTEXT('userenv','sid');

This will give you an overview of the current settings applied to your session. If you find that PDML_STATUS = DISABLED and PDML_ENABLED = NO, you can change this by executing an ALTER SESSION as shown below:

ALTER SESSION ENABLE PARALLEL DML;

When you rerun the above query you should now see that PDML_STATUS = ENABLED and PDML_ENABLED = YES. Now that these flags are set correctly you can add hints to your statements to make optimal use of parallelism. Do note that enabling parallel DML alone does not solve all your issues; you will still have to look at the code you use to load the data into the Exadata.
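As a minimal sketch of what such hinted statements could look like, the Python helper below only assembles the SQL text for a parallel direct-path load; it does not connect to a database. The table names `sales_fact` and `sales_staging`, the helper name `build_parallel_load` and the degree of parallelism are assumptions for illustration, and the right hints will depend on your own load code. APPEND and PARALLEL are standard Oracle hints.

```python
def build_parallel_load(target: str, source: str, degree: int = 8) -> list[str]:
    """Return the statements for a parallel direct-path load, in execution order.

    The table names and the degree are hypothetical; this only builds SQL text.
    """
    if degree < 1:
        raise ValueError("degree must be at least 1")
    return [
        # Parallel DML must be enabled in the session before the INSERT runs.
        "ALTER SESSION ENABLE PARALLEL DML",
        # APPEND requests a direct-path insert, PARALLEL sets the requested degree.
        f"INSERT /*+ APPEND PARALLEL(t, {degree}) */ INTO {target} t "
        f"SELECT /*+ PARALLEL(s, {degree}) */ * FROM {source} s",
        # Commit before the session touches the target table again.
        "COMMIT",
    ]


for statement in build_parallel_load("sales_fact", "sales_staging"):
    print(statement + ";")
```

The COMMIT is part of the sequence because a table modified by parallel DML cannot be queried again in the same transaction until that transaction is committed or rolled back.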

Wednesday, November 05, 2014

Configure Exadata storage cell mail notification

The Oracle Exadata database machine gets a lot of its performance not specifically from the compute nodes or the InfiniBand switches; one of the main game changers is the storage cells used within the Exadata. The primary way, for command line people, to interact with the storage cells is the “cell command line interface”, commonly called CellCLI.

If you want to ensure your storage cell informs you via mail notifications, you can configure or alter the storage cell email notification configuration using CellCLI.

Before changing the configuration you will first want to know the current configuration of a storage cell. You can execute the following command to request the current configuration:

CellCLI> list cell detail

For mail configuration you will primarily want to look at:

- smtpServer

- smtpFromAddr

- smtpFrom

- smtpToAddr

- notificationMethod

To test the current configuration you can have the storage cell send a test mail notification to see if the configuration works as you expect. The VALIDATE MAIL operation sends a test message using the e-mail attributes configured for the cell. You can use the CellCLI command below for this:

CellCLI> ALTER CELL VALIDATE MAIL

If you want to change something you can use the “ALTER CELL” command in CellCLI. As an example, the command below sets all the information for a full configuration so that your Exadata storage cell will send you mail notifications. As you can see it will also send SNMP notifications; however, the full configuration for SNMP is not shown in this example.

CellCLI> ALTER CELL smtpServer='smtp0.seczone.internal.com', -
         smtpFromAddr='huntgroup@internal.com', -
         smtpFrom='Exadata-Huntgroup', -
         notificationMethod='mail,snmp'

Wednesday, October 22, 2014

Zero Data Loss Recovery Appliance

During Oracle OpenWorld 2014 Oracle released the Zero Data Loss Recovery Appliance as one of the new Oracle Engineered Systems. It is specifically designed to address backup and recovery challenges in modern database deployments, and to ensure that a customer can always perform a point-in-time recovery in an always-on economy where downtime results directly in loss of revenue and the loss of data can potentially bankrupt the enterprise.

One of the main benefits of introducing the Zero Data Loss Recovery Appliance is that it gives you the leverage to standardize and optimize all backup and recovery strategies according to Oracle best practices. In most enterprise deployments you still see that backup and recovery strategies differ across a wide Oracle database deployment landscape.

It is not uncommon for backup and recovery strategies to involve multiple teams, multiple tools and scripts, and multiple ways of implementation that have accumulated over time. Without an optimized and standardized solution for backup and recovery, organizations lack enterprise-wide insight into how well the data is protected against data loss, and a uniform way of working for recovery is missing. This introduces the risk that data is lost due to missed backups or due to an incompatible way of restoring.

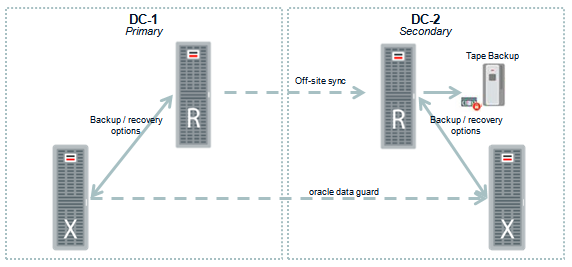

The diagram shows a dual datacenter solution for the Zero Data Loss Recovery Appliance in which it is connected to an Oracle Exadata machine. However, all databases, regardless of the server platform they are deployed on, can be connected to the Zero Data Loss Recovery Appliance.

When operating a large enterprise-wide Oracle landscape, customers use Oracle Enterprise Manager for full end-to-end monitoring and management. One of the additional benefits of the Zero Data Loss Recovery Appliance is that it can be fully managed by Oracle Enterprise Manager, which means that the complete management of all components is done via Oracle Enterprise Manager. This is in contrast to home-grown solutions, where customers are in some cases forced to use separate management tooling for each of the different hardware and software components that make up the full backup and recovery solution.

For more information about the Zero Data Loss Recovery Appliance please also refer to the below shown presentation.

According to the Oracle documentation the key features of the Zero Data Loss Recovery Appliance are:

- Real-time redo transport

- Secure replication

- Autonomous tape archival

- End-to-end data validation

- Incremental-forever backup strategy

- Space-efficient virtual full backups

- Backup operations offload

- Database-level protection policies

- Database-aware space management

- Cloud-scale architecture

- Unified management and control

- Eliminate Data Loss

- Minimal Impact Backups

- Database Level Recoverability

- Cloud-scale Data Protection

Wednesday, October 08, 2014

Oracle Enterprise Manager, Metering and Chargeback

When discussing IT with the business side of an enterprise, the general opinion is that IT departments should, among other things, be like a utility company: providing services to the business so it can accelerate what it does, not dictating how to do business but providing the services needed when, where and how the business needs them. This point of view is a valid one, and is fueled by the rise of cloud and the Business Driven IT Management paradigm.

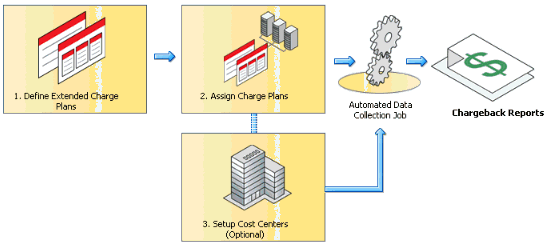

The often forgotten, overlooked or deliberately ignored part of viewing your IT department as a utility company is that the consumer is charged based upon consumption. In many enterprises, large and small, the funding of the IT department and of projects is based on a percentage of the budget each department receives in the annual budget. The consolidated value of the IT share of all departments is the budget for the IT department. Even though this seems a reasonably fair approach, it is in many cases an unfair distribution of IT costs.

When business departments consider the IT department a service organization in the same way they consider utility companies a service organization, the natural evolutionary step is that the IT department invoices the business departments based upon usage. By transforming the funding of the IT department from a budget-based income into a commercially gained income, a number of things will be accomplished:

- Fair distribution of IT costs between business departments

- Forcing IT departments to become more effective

- Forcing IT departments to become more innovative

- Forcing IT departments to become more financially aware
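To make the fairness argument concrete, the following Python sketch compares the two funding models on the same IT cost. All department names and figures are made up for illustration, and the two function names are hypothetical; the only point is that a department with a large budget but little actual usage pays far less once costs follow consumption.

```python
def allocate_by_budget_share(it_cost, budgets):
    """Split the IT cost in proportion to each department's annual budget."""
    total_budget = sum(budgets.values())
    return {dept: it_cost * budget / total_budget for dept, budget in budgets.items()}


def allocate_by_usage(it_cost, usage_hours):
    """Split the IT cost in proportion to the metered usage of each department."""
    total_hours = sum(usage_hours.values())
    return {dept: it_cost * hours / total_hours for dept, hours in usage_hours.items()}


# Hypothetical figures: sales has the larger budget, finance the larger usage.
it_cost = 100_000
budgets = {"sales": 600_000, "finance": 400_000}
usage_hours = {"sales": 2_000, "finance": 8_000}

print("budget share:", allocate_by_budget_share(it_cost, budgets))
print("usage based: ", allocate_by_usage(it_cost, usage_hours))
```

With these assumed numbers, sales carries 60% of the IT cost under the budget-share model but only 20% under the usage-based model.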

One of the foundations of this strategy is that the IT department is able to track the usage of systems. As companies are moving to cloud-based solutions, implementing systems in private and public clouds, this is the ideal moment to move to a pay-per-use model.

When using Oracle Enterprise Manager as part of your cloud, as for example in the blog post “The future of the small cloud”, you can also use the “metering and chargeback” options that are part of the cloud foundation of Oracle Enterprise Manager. Oracle Enterprise Manager allows you to monitor the usage of assets that you define, for a price per time unit that you define. When deploying the metering and chargeback solution within Oracle Enterprise Manager, the implementation models to calculate the price per time unit for your internal departments are virtually endless.

The setup of metering and chargeback focuses on defining charge plans and assigning the charge plans to specific internal customers and cost centers.

The setup of the full end-to-end solution will take time, including time to set up the technical side of things, as shown in the example screenshot. However, the majority of the time will be spent identifying and calculating what the exact price for an item should be. This should include all the known and hidden costs the IT department incurs before the item is delivered to internal customers, for example housing, hosting, management, employees, training and licenses. All of this should be calculated into the price per item per time unit. This is a purely financial calculation that needs to be done.
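As a rough illustration of that financial calculation, the Python sketch below rolls a set of annual cost components up into a price per item per hour. The cost figures, the number of items, the utilization factor and the function name `price_per_item_hour` are all assumptions made for this example; a real charge plan will need your own cost model.

```python
HOURS_PER_YEAR = 365 * 24  # time unit used here: one hour


def price_per_item_hour(annual_costs, items, utilization=1.0):
    """Spread the total annual cost over the hours the items are expected to be billed.

    annual_costs: cost per component per year (known and hidden costs)
    items:        number of identical items, for example virtual machines
    utilization:  expected fraction of the year an item is actually charged
    """
    if items < 1 or not 0 < utilization <= 1:
        raise ValueError("items must be >= 1 and utilization in (0, 1]")
    billable_hours = items * HOURS_PER_YEAR * utilization
    return sum(annual_costs.values()) / billable_hours


# Hypothetical annual costs for a pool of 10 virtual machines.
annual_costs = {
    "housing": 20_000,
    "hosting": 30_000,
    "management": 40_000,
    "employees": 120_000,
    "training": 10_000,
    "licenses": 42_800,
}

print(price_per_item_hour(annual_costs, items=10))                    # fully utilized
print(price_per_item_hour(annual_costs, items=10, utilization=0.75))  # 75% utilized
```

The utilization factor matters: the lower the expected usage, the higher the hourly price has to be to recover the same total cost.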

Even though metering and chargeback is part of the Oracle Enterprise Manager solution, in reality most companies use it as a metering and showback solution to inform internal departments about the costs. A next step for companies currently using metering and showback within Oracle Enterprise Manager is to really bill internal departments based upon consumption. This, however, is more an internal change of mindset than a technological implementation.

Implementing “metering and chargeback” is needed more and more in the modern enterprise, not purely from a technical point of view but rather from a business model modernization point of view. By implementing Oracle Enterprise Manager as the central management and monitoring solution and including the “metering and chargeback” options, modern enterprises get a direct benefit out of the box.

Manage all databases with one tool

When Oracle acquired Sun, a lot of active MySQL users wondered in which direction the development of MySQL would go. Oracle has been developing and expanding the functionality of the MySQL database continuously since the acquisition. The surprising part has been that integration with Oracle Enterprise Manager had not been developed. That has now changed: during Oracle OpenWorld 2014, Oracle announced the launch of the Oracle Enterprise Manager plugin for MySQL Enterprise Edition.

An unofficial MySQL plugin has been around for some time; however, the launch of the official MySQL plugin is significant. The introduction of the MySQL plugin is important not so much from a technological point of view as from an integration and management point of view.

The majority of enterprises that host Oracle databases also host MySQL databases in their IT infrastructure. The statement that MySQL is only used for small databases and small deployments is incorrect; as an example, Facebook runs tens of thousands of MySQL servers and a typical instance is 1 to 2 TB. As companies implement and use Oracle databases, and most likely Oracle middleware, they need central management using Oracle Enterprise Manager 12c, possibly to improve the day-to-day operations and maintenance of the landscape, possibly to adopt a cloud-based approach to IT management.

Prior to the launch of the Oracle Enterprise Manager MySQL plugin, companies were forced to use out-of-band management tooling for day-to-day operations.

With the introduction of the Oracle Enterprise Manager MySQL plugin you can now incorporate the management of your MySQL databases into Oracle Enterprise Manager. This provides a single point of management and monitoring, resulting directly in a better managed IT landscape and a quick return on investment.

On a high level the new Oracle Enterprise Manager MySQL plugin provides the following features:

- MySQL Performance Monitoring

- MySQL Availability Monitoring

- MySQL Metric Collection

- MySQL Alerts and Notifications

- MySQL Configuration Management

- MySQL Reports

- MySQL Remote Monitoring

In general, the MySQL plugin for Oracle Enterprise Manager gives you the option to unify the monitoring and management of all your MySQL and Oracle databases in one tool, which will result in better management, improved service availability and stability, as well as a reduction in cost due to centralized tooling and the avoidance of unneeded downtime.

Sunday, September 28, 2014

The future of the small cloud

When talking about cloud, Amazon, Azure and Oracle Cloud immediately come to mind for a lot of people. When talking about private cloud, the general idea is that this is a model which is valuable for large customers running hundreds or thousands of environments, and that deploying a private cloud solution requires a large investment in hardware, software, networking and human resources.

Even though the public cloud provides a lot of benefits and relieves companies from CAPEX costs, in some cases it is beneficial to create a private cloud. This is not only the case for large enterprises running thousands of services; it is also the case for small companies. Some of the reasons that a private cloud can be more applicable than a public cloud are, for example:

• Legal requirements

• Compliance rules and regulations

• Confidentiality of data and/or source code

• Specific needs around control beyond the possibilities of public cloud

• Specific needs around performance beyond the possibilities of public cloud

• Specific architectural and technical requirements beyond the possibilities of public cloud

There are more specific reasons why a private cloud, or hybrid cloud, can be more beneficial than a public cloud for small companies; these can be determined on a case-by-case basis. Capgemini provides roadmap architecture services to support customers in determining the best solution for a specific case, which can be public cloud, private cloud or a mix of both in the form of a hybrid cloud. This is next to more traditional solutions that are still very valid for customers in many cases.

One of the main misconceptions around private cloud is that it is considered to be only valid for large deployments and large enterprises. The general opinion is that there is a need for a high initial investment in hardware, software and knowledge. As stated, this is a misconception. By using both Oracle hardware and software there is an option to build a relatively low-cost private cloud which can be managed for a large part from a central graphical user interface in the form of Oracle Enterprise Manager.

A private cloud can be started with a simple deployment of two or more Sun X4-* servers, using Oracle VM as the hypervisor for virtualization. This can be the starting point for a simple self-service enabled private cloud where departments and developers can provision systems in an infrastructure-as-a-service manner or provision databases and middleware in the same fashion.

By using the above setup in combination with Oracle Enterprise Manager you can have a simple private cloud up and running in a matter of days. This will enable your business or your local development teams to make use of a private in-house cloud where they can use self-service portals to deploy new virtual machines, databases or applications in a matter of minutes, based upon templates provided by Oracle.

Friday, August 29, 2014

Oracle Database FIPS 140-2 security

With the release of Oracle database 12.1.0.2 Oracle has introduced a new security-related parameter in the database. The new parameter DBFIPS_140 is used to ensure your database is secured according to the FIPS 140-2 standard, level 2. FIPS stands for Federal Information Processing Standard; FIPS 140-2 specifies the security requirements for the cryptographic modules used to protect data at rest and during transmission.

The fact that Oracle now has a parameter in the database which ensures that your data is secured in accordance with FIPS 140-2 level 2 is a huge benefit when deploying databases in government environments demanding FIPS compliance. However, it can also be used for non-government systems, as it demonstrates a level of security implemented in your system.

Ensuring your entire solution is FIPS compliant in an end-to-end fashion will take more than only activating the DBFIPS_140 parameter in your database; however, from the point of view of the database component in the overall solution it is a good thing.

The FIPS 140-2 setting uses the cryptographic libraries included in the Oracle database, which are designed to meet the federal requirements for data encryption at rest and during transmission. For this Oracle uses a combination of three solutions: a Secure Sockets Layer (SSL) implementation, Transparent Data Encryption (TDE) and the DBMS_CRYPTO package.

To active the FIPS 140 setting you have to apply the below command and restart your database to ensure the change has taken effect;

ALTER SYSTEM SET DBFIPS_140 = TRUE;

When designing a secure environment for you customer, government or non-government it is however of importance to understand that security takes more then only activating DBFIPS_140. Even if you only take the database into account a real secure Oracle database implementation will take a lot more and will include full separate security architecture. The Oracle Advanced Security portfolio for databases contains a lot of products, which are pure for the database.

Implementing this, and taking into account you will still need additional security around networking, operating systems, physical location security, client system security and others will take more time then an average secured system. Only securing the database will provide you a secure solution for your database however to ensure true security you will have to apply the same level of masseurs on all components of your secured landscape.

The fact that Oracle now offers a database parameter that controls whether your data is secured in accordance with FIPS 140-2 level 2 is a huge benefit when deploying databases in government environments that demand FIPS compliance. It can also be used for non-government systems, as it demonstrates a level of security implemented in your system.

Making your entire solution FIPS compliant in an end-to-end fashion will take more than only activating the DBFIPS_140 parameter in your database. However, from the point of view of the database component in the overall solution it is a good thing.

The current DBFIPS_140 parameter is designed to be compliant with FIPS 140-2 level 2. The FIPS 140-2 standard consists of four levels, of which Oracle currently covers level 2. The overall standard describes the levels within FIPS 140-2 as follows:

- FIPS 140-2 Level 1, the lowest, imposes very limited requirements; loosely, all components must be "production-grade" and various egregious kinds of insecurity must be absent.

- FIPS 140-2 Level 2 adds requirements for physical tamper-evidence and role-based authentication.

- FIPS 140-2 Level 3 adds requirements for physical tamper-resistance (making it difficult for attackers to gain access to sensitive information contained in the module) and identity-based authentication, and for a physical or logical separation between the interfaces by which "critical security parameters" enter and leave the module, and its other interfaces.

- FIPS 140-2 Level 4 makes the physical security requirements more stringent, and requires robustness against environmental attacks.

The FIPS 140-2 setting uses the cryptographic libraries included in the Oracle database, which are designed to meet the federal requirements for encrypting data at rest and during transmission. For this Oracle uses a combination of three solutions: a Secure Sockets Layer (SSL) implementation, Transparent Data Encryption (TDE) and the DBMS_CRYPTO package.

To activate the FIPS 140 setting you have to apply the below command and restart your database to ensure the change has taken effect:

ALTER SYSTEM SET DBFIPS_140 = TRUE;

When designing a secure environment for your customer, government or non-government, it is important to understand that security takes more than only activating DBFIPS_140. Even if you only take the database into account, a truly secure Oracle database implementation will take a lot more and will include a full, separate security architecture. The Oracle Advanced Security portfolio for databases contains a lot of products that are purely for the database.

Implementing this, while taking into account that you will still need additional security around networking, operating systems, physical location security, client system security and others, will take more time than an averagely secured system. Only securing the database will provide you with a secure solution for your database; to ensure true security you will have to apply the same level of measures to all components of your secured landscape.

Tuesday, August 05, 2014

Oracle VirtualBox disk UUID issue resolved

Oracle VirtualBox is a desktop virtualization technology used by many developers and system administrators to quickly run a virtual operating system on top of their workstation OS. It is freely available from Oracle and is widely adopted. Even though it is a robust solution for running virtual machines on your workstation, it can have issues in some situations. Especially when you change the location of your virtual disks there might be a strange error in the VirtualBox GUI.

Because I was running out of disk space, I was forced to move some of the virtual disks attached to my virtual machines to another disk on my workstation. Oracle VirtualBox allows you to attach (or detach) disks to a virtual machine via the GUI. However, if you move a virtual disk to another location and try to re-attach it to a virtual machine, the GUI gives a warning like the one below:

The message reads that VirtualBox cannot register the hard disk with a specific UUID because a hard disk with the same UUID is already known. This is due to the fact that VirtualBox keeps track of virtual disk files with a combination of UUID and location. As you move the file, it is seen as a different virtual disk, however with the same UUID. The solution is to change the UUID in the file so VirtualBox will see it as a new disk and you will be able to attach it to your virtual machine again. On Windows (host) systems this can be resolved by executing the below command:
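A minimal sketch of such a command, assuming the standard `VBoxManage` tool that ships with VirtualBox: its `internalcommands sethduuid` subcommand writes a new UUID into a disk image, for example `VBoxManage internalcommands sethduuid "D:\VirtualDisks\example.vdi"`, where the path is a hypothetical placeholder for the new location of your disk. The Python wrapper below only illustrates how this could be scripted; the function names are my own and are not part of VirtualBox.

```python
import subprocess
from pathlib import PureWindowsPath


def build_sethduuid_command(disk_path, vboxmanage="VBoxManage"):
    """Return the argument list that assigns a fresh UUID to a virtual disk file."""
    return [vboxmanage, "internalcommands", "sethduuid", str(disk_path)]


def reset_disk_uuid(disk_path, vboxmanage="VBoxManage"):
    """Run the command; raises CalledProcessError if VBoxManage reports a failure."""
    subprocess.run(build_sethduuid_command(disk_path, vboxmanage), check=True)


if __name__ == "__main__":
    # Hypothetical new location of the moved disk image.
    moved_disk = PureWindowsPath(r"D:\VirtualDisks\example.vdi")
    # Only print the command here; call reset_disk_uuid(moved_disk) to execute it.
    print(" ".join(build_sethduuid_command(moved_disk)))
```

If `VBoxManage` is not on your PATH, pass its full path as the `vboxmanage` argument.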

After executing this command you will see that you are able to attach the disk without any issue and can use it again while running at its new location.

Oracle Big Data trends 2014

Oracle has released an insight into the top trends for 2014 in relation to Big Data and Analytics. As can be seen from the 10 points that Oracle sees as trends for 2014 there is a clear focus on big data, predictive analytics and integrating this into existing solutions and processes within an enterprise.

1) Mobile Analytics are on the rise; plans for mobile BI initiatives will double this year.

2) ½ of organizations will move analytics to the cloud for easier reporting.

3) ¼ of organizations will unite Hadoop-based data reservoirs with data warehouses as a cost-effective method for long-term storage and in-place analysis.

4) Organizations will double the number of people with advanced skills in Hadoop and predictive analysis in the coming year.

5) 33% of human capital management professionals will use big data discovery tools to explore data from performance reviews, internal surveys, professional profiles and insider workplace websites such as Glassdoor.

6) 40% will prioritize predictive analysis to gain insight into big data strategies

7) 52% will use predictive analytics to gain insight into old business processes

8) 59% will use decision optimization technologies to provide a more personalized and more effective experience for customer interaction.

9) 44% of decision makers will embrace packaged analytics to integrate with existing ERP systems.

10) Organizations still feel their analytics skills are on a beginner level. To keep up they will focus on developing analytic competences.

Saturday, July 26, 2014

Query Big Data with SQL

Data management used to be “easy” within enterprises: in most cases data was stored in files on a file system or in a relational database. With some small exceptions this was where you were able to find data. With the explosion of data we see today, and with the innovation around the question of how to handle that explosion, we see a lot more options coming into play. The rise of NoSQL databases and of HDFS-based Hadoop solutions places data in a lot more places than only the two mentioned.

Having the option to store data where it is likely to add the most value to the company is, from an architectural point of view, a great addition. Having the option, for example, not to choose a relational database but to store data in a NoSQL database or an HDFS file system gives architects a lot more flexibility when creating an enterprise-wide strategy. However, it also causes a new problem: combining data might become much harder. When you store all your data in a relational database you can easily query all the data with a single SQL statement. When parts of your data reside in a relational database, parts in a NoSQL database and parts in an HDFS cluster, getting a single overview becomes harder and a lot of additional coding might be required.
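To make that "additional coding" concrete, here is a small, hedged Python sketch. It is not how any particular product works: an in-memory SQLite table stands in for the relational database, a dictionary for the NoSQL store and a CSV string for a file on HDFS. All table names, keys and values are made up for illustration.

```python
import csv
import io
import sqlite3

# Relational source: an in-memory SQLite table stands in for the RDBMS.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
db.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Alice"), (2, "Bob")])

# NoSQL source: a key-value mapping stands in for a document store.
profiles = {1: {"segment": "gold"}, 2: {"segment": "silver"}}

# HDFS source: CSV text stands in for a file stored on the cluster.
CLICKSTREAM_CSV = "customer_id,clicks\n1,40\n2,7\n1,2\n"


def customer_overview():
    """Join the three sources by hand into one row per customer."""
    clicks = {}
    for record in csv.DictReader(io.StringIO(CLICKSTREAM_CSV)):
        key = int(record["customer_id"])
        clicks[key] = clicks.get(key, 0) + int(record["clicks"])

    overview = []
    for cust_id, name in db.execute("SELECT id, name FROM customers ORDER BY id"):
        segment = profiles.get(cust_id, {}).get("segment")
        overview.append((cust_id, name, segment, clicks.get(cust_id, 0)))
    return overview


if __name__ == "__main__":
    for row in customer_overview():
        print(row)
```

Every source needs its own access code and the join logic lives in the application, which is exactly the work a single SQL statement would otherwise do for you.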

Oracle announced “Oracle Big Data SQL”, an “addition” to the SQL language which enables you to query data not only in the Oracle Database but also, in the same select statement, data that resides in other places. Other places being Hadoop HDFS clusters and NoSQL databases. By extending the data dictionary of the Oracle database and allowing it to store information about data that is stored in NoSQL or Hadoop HDFS clusters, Oracle can now make use of those sources in combination with the data stored in the database itself.

The Oracle Big Data SQL way of working will allow you to create single queries in your familiar SQL language and execute them on other platforms. The Oracle Big Data SQL implementation will take care of the translation to other languages while developers can stick to SQL as they are used to.

Oracle Big Data SQL is available with Oracle Database 12c in combination with the Oracle Exadata engineered system and the Oracle Big Data Appliance engineered system. The use of Oracle Engineered Systems makes sense, as you are able to use InfiniBand connections between the two systems to eliminate the network bottleneck. The entire design of pushing parts of a query to another system is also in line with how Exadata works. In the Exadata machine a large part of the workload (or number crunching) is done not on the compute nodes but rather on the storage nodes. This ensures that more CPU cycles are available for other tasks, and that sorting, filtering and similar operations are done where they are supposed to be done, on the storage layer.

A similar strategy is what you see in the implementation of Oracle Big Data SQL. When a query (or part of a query) is pushed to the Oracle Big Data Appliance, only the answer is sent back and not a full set of data. This means that (again) the CPUs of the database instance are not loaded with tasks that can be done somewhere else (on the Big Data Appliance).
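The effect of pushing the filter to the data can be illustrated with a toy Python model. This is only a sketch of the general idea, not of Oracle's implementation: `RemoteStore`, its methods and the sample rows are all hypothetical, and `rows_shipped` simply counts how many rows would cross the network.

```python
from dataclasses import dataclass


@dataclass
class RemoteStore:
    """Stand-in for a remote data source such as a Hadoop cluster."""
    rows: list
    rows_shipped: int = 0

    def fetch_all(self):
        """Ship every row to the caller, which must then filter locally."""
        self.rows_shipped += len(self.rows)
        return list(self.rows)

    def fetch_where(self, predicate):
        """Evaluate the filter at the source and ship only matching rows."""
        matches = [row for row in self.rows if predicate(row)]
        self.rows_shipped += len(matches)
        return matches


def is_large_order(row):
    return row["amount"] > 900


# Hypothetical data set: 100 orders with amounts 0, 10, ..., 990.
orders = [{"customer_id": i, "amount": i * 10} for i in range(100)]

if __name__ == "__main__":
    naive = RemoteStore(orders)
    local_result = [row for row in naive.fetch_all() if is_large_order(row)]

    pushed = RemoteStore(orders)
    remote_result = pushed.fetch_where(is_large_order)

    print(naive.rows_shipped, pushed.rows_shipped, local_result == remote_result)
```

Both approaches return the same rows; the difference is how much data has to travel and where the CPU time is spent.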

The option to use Oracle Big Data SQL has a number of advantages for our customers, on a technical as well as an architectural and integration level. We can now lower the load on database instance CPUs and are not forced to manually create connections between relational databases and NoSQL and Hadoop HDFS solutions. At the same time it helps customers get a rapid return on investment. Some Capgemini statements can be found on the Oracle website in a post by Peter Jeffcock and Brad Tewksbury from Oracle after the Oracle Key partner briefing on Oracle Big Data SQL.

Sunday, July 13, 2014

Oracle will take three years to become a cloud company

Traditional software vendors who have been relying on a steady income of license revenue are forced either to change their standing business model radically or to be overrun by new and upcoming companies. The change that cloud computing is bringing is compared by some industry analysts to the introduction of the Internet. The introduction and rapid growth of the Internet started a completely new sub-industry in the IT industry and created the IT bubble, which made numerous companies bankrupt when it burst.

As the current standing companies see the threat and the possibilities of cloud computing rising, they are trying to change direction to ensure survival. Oracle, being one of the biggest enterprise-oriented software vendors at this moment, is currently changing direction and stepping into cloud computing full swing. It does so by extending the more traditional way of doing business, providing tools to create private cloud solutions for customers, and also by becoming a cloud vendor itself in the form of IaaS, SaaS, DBaaS and some other forms of cloud computing.

According to a recent article from Investor's Business Daily the transition for Oracle will take around three years to complete. According to Susan Anthony, an analyst for Mirabaud Securities, it will take around five years until cloud-based solutions contribute significantly more than the current license sales model:

"As the shift takes place, the software vendors' new license revenues will ... be replaced to some extent by the cloud-subscription model, which within three years will match the revenues that would have been generated by the equivalent perpetual license and, over five years, contribute significantly more"

The key to success for Oracle and for other companies will be to attract people with a different mindset than the people they currently have. The traditional way of thinking is so deeply embedded in these companies that a more cloud-minded generation will be needed to help turn the cloud transformation of traditional companies into a success. Michael Turits, an analyst for Raymond James & Associates, states the following on this critical success factor:

"It takes a lot to turn the battleship and transition a legacy (software) company into a cloud company, We believe they are hiring people to focus on cloud sales and that the incentive structure is being altered to speed the transition."

Analysts are united in the belief that this is a needed transition for Oracle to survive, but also that in the short term it will hurt the revenue stream of the company and by doing so negatively influence the stock price for the upcoming years. Rick Sherlund, a Nomura Securities analyst, wrote in a June 25 research note:

"Oracle, like other traditional, on-premise software vendors, will be financially disadvantaged over the short term as its upfront on-premise license revenues are cannibalized by the recurring cloud-based revenues, therefore, we model expected license revenues to be flat to down for the next two years (during) the transition."

We can currently see the transition taking place. On June 25, 2014, Mark Hurd presented the Oracle cloud strategy for the upcoming years, covering not only the expansion in global datacenters for hosting the new business model but also the growth predictions for the upcoming years. Looking at the growth in datacenters, you can see that Oracle is serious about its cloud strategy and transformation.

The full presentation deck can be found embedded below:

Wednesday, July 09, 2014

Room rates based upon big data

Hotels traditionally have flexible rates for their rooms. The never-ending challenge for hotels, however, is when to raise the rates and when to drop them. A commonly seen solution is to raise prices as the date the room will be occupied comes closer, and to drop the price one or two days in advance if the room has not been sold yet. On average this works quite well; however, it is a suboptimal and unsophisticated way of introducing dynamic pricing for hotel rooms. The real value of a room depends on a large set of parameters that are constantly changing.

For example the weather, vacations, conventions in town or airlines that are on strike will all influence the demand for rooms. If you are able to react to changing variables directly you will be able to make the average hotel room more profitable. Keeping track of all kinds of information from a large number of sources and benchmarking this against results from the past is an extremely difficult task to do manually, or even to code a custom application for. Duetto recently raised $21M in venture capital from Accel Partners to expand their SaaS solution, which provides exactly this service to hotels.

Duetto provides a SaaS solution from the cloud that keeps track of all potentially interesting data sources and mines this data to dynamically change the room rates for a hotel based upon the results.

By mining and processing big data Duetto is able to advise hotels on when to drop or raise the price. This can change at a moment's notice and without hotel employees having to keep track of everything that is happening in the area. Duetto provides all hotels an easy solution for implementing intelligent dynamic pricing. The big advantage Duetto offers is that it is a SaaS solution that is ready to run from day one, instead of a home-grown solution which might take a long period to develop, test and benchmark before it becomes usable.
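As a deliberately simplified illustration of dynamic pricing driven by demand signals, consider the Python sketch below. It is not Duetto's method: the function name, the signals chosen and every multiplier are assumptions made up for this example, whereas a real system would derive such factors from historical data.

```python
def adjusted_rate(base_rate, occupancy_forecast, event_in_town=False,
                  airline_strike=False, floor=0.7, ceiling=2.0):
    """Scale a base room rate by a few hypothetical demand signals.

    occupancy_forecast is a fraction between 0.0 and 1.0.
    floor and ceiling bound the overall multiplier.
    """
    # Hypothetical mapping: 0% forecast gives 0.8x, 100% forecast gives 1.4x.
    multiplier = 0.8 + 0.6 * occupancy_forecast
    if event_in_town:
        multiplier *= 1.25  # assumed uplift for a convention in town
    if airline_strike:
        multiplier *= 1.10  # assumed uplift for stranded travellers
    multiplier = max(floor, min(ceiling, multiplier))
    return round(base_rate * multiplier, 2)


if __name__ == "__main__":
    print(adjusted_rate(100, 0.5))
    print(adjusted_rate(100, 0.5, event_in_town=True))
```

The point of the sketch is only that each new signal changes the price immediately, without anyone having to watch the signals by hand.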

It is not unlikely that Duetto will be expanding their services in the near future to other industries. The demand for dynamic pricing based upon big data will only be a growing market in the upcoming years and Duetto will be in an ideal position to expand their services to new growth markets.

Monday, July 07, 2014

Using R and Oracle Exadata

Currently the R language is the language of choice for statistical computing and is widely adopted in the commercial and scientific communities. R is a free statistical language developed in 1993 and released under the GNU General Public License. Traditionally R has been used to do statistical computations on large sets of data, and due to this it is seeing adoption in the Big Data ecosystem, even though it is not as widely adopted as for example the MapReduce programming paradigm, which has its high adoption rate thanks to Apache Hadoop.

Even so, R is claiming its place in the Big Data ecosystem and is seeing enterprise-grade adoption. Due to this there are a number of enterprise-ready R implementations available. Oracle is one of the companies that have developed an enterprise-ready R, named “Oracle R Enterprise”. What is interesting about the Oracle R Enterprise distribution is that it becomes a part of the database server itself.

In general the idea is that multiple R engines will be spawned on the database server and will work in parallel to execute the computations needed. Depending on your programming, the results can be stored in the database, given to a workstation or sent to a Hadoop cluster to execute additional computations. In addition, thanks to the Hadoop connector, R inside the database server can potentially also make use of data inside the Hadoop cluster if needed. From a high-level perspective this will look like the diagram below.
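The pattern of several engines each working on part of the data and the partial results being combined afterwards can be sketched in a few lines. The example below is in Python rather than R and is only an analogy under stated assumptions: worker threads stand in for the R engines, and `partial_stats` and `parallel_mean` are hypothetical names, not Oracle R Enterprise functions.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_stats(chunk):
    """Work done by one engine: a partial sum and count for its chunk."""
    return sum(chunk), len(chunk)


def parallel_mean(values, engines=4):
    """Split the data, let each engine compute a partial result, then combine."""
    if not values:
        raise ValueError("values must not be empty")
    size = -(-len(values) // engines)  # ceiling division
    chunks = [values[i:i + size] for i in range(0, len(values), size)]
    with ThreadPoolExecutor(max_workers=engines) as pool:
        partials = list(pool.map(partial_stats, chunks))
    total = sum(part_sum for part_sum, _ in partials)
    count = sum(part_count for _, part_count in partials)
    return total / count


if __name__ == "__main__":
    print(parallel_mean(list(range(1, 101))))
```

Only the small partial results travel back to the caller, which is what makes it attractive to run the engines close to the data.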

Oracle provides engineered systems for Big Data, for analytics and for the Oracle database. This means we can also deploy the scenario outlined above on an engineered systems deployment. In the below diagram we use a pure Oracle Engineered Systems solution; however, this is not required. You can use Oracle engineered systems where you deem them needed and leave them out where you do not need them. There are, however, large benefits when deploying a full engineered system solution.

In the above example diagram the deployment is using Oracle Exadata, Oracle Big Data Appliances and the Oracle Exalytics machine. By combining those you will benefit both from R and from the capabilities of the Oracle Engineered Systems. When you need to deploy R for analytical computing and you are also using Oracle databases and applications on a wider scale in your IT landscape, it will be extremely beneficial to give Oracle R Enterprise consideration and, depending on the size of your data, to combine this with Oracle Engineered Systems.

Monday, June 30, 2014

Puppet and Oracle Enterprise Manager

Enterprises have been using virtualization for years as part of their datacenter strategy. Recently you see that virtualization solutions are turning into private cloud solutions, which enable business users even more to quickly request new systems and make full use of the benefits of cloud within the confinement of their own datacenter. A number of vendors provide both the software and the hardware to kickstart the deployment of a private cloud.

Oracle provides an engineered system in the form of the Oracle Virtual Compute Appliance (OVCA), a pre-installed combination of hardware and software which enables customers to get up and running in days instead of months. However, a similar solution can also be created “manually”. All software components are available separately from the OVCA. Central within the private cloud strategy from Oracle is Oracle Enterprise Manager 12c in combination with Oracle VM and Oracle Linux.

In the below diagram you can see a typical deployment of a private cloud solution based upon Oracle software.

As you can see in the above diagram Oracle Enterprise Manager plays a vital role in the Oracle private cloud architecture. Oracle positions Oracle Enterprise Manager as the central monitoring and provisioning tooling for both the infrastructure components and the application and database components. Next to this, Oracle Enterprise Manager is used for patching operating system components as well as application and database components. In general Oracle positions Oracle Enterprise Manager as the central solution for your entire private cloud. Oracle Enterprise Manager ties in with Oracle VM Manager and enables customers to request and provision new virtual servers from an administrator role, but also by using the cloud self-service portals where users can create (and destroy) their own virtual servers. Before you can do so you have to ensure that your Oracle VM Manager is connected to Oracle Enterprise Manager and that Oracle VM itself is configured.

The initial steps to configure Oracle VM to be able to run virtual machines are outlined below and are commonly only needed once.

As you can observe, quite a number of steps are needed before you will be able to create your first virtual machine. Not included in this diagram are the efforts needed to set up Oracle Enterprise Manager, combine it with Oracle VM Manager and activate the self-service portals that users can use to create a virtual machine without the need for an administrator.

In general, when you create / provision a new virtual machine via Oracle tooling you will be making use of a template. A pre-defined template which can contain one or more virtual machines and which can potentially contain a full application or database. For this you can make use of the Oracle VM template builder or you can download pre-defined templates. The templates stored in your Oracle VM template repository can be used to provision a new virtual machine. Commonly used strategy is to use the right mix of things you put into your template and things you do configure and install using a first boot script which will be started the first time when a new virtual machine starts. even though you can do a lot in the first boot script this still will require you to potentially create and maintain a large set of templates which might differ substantially per application you would like to install or per environment it will be used in.

In a more ideal scenario you will not be developing a large set of templates. Instead you will maintain only one template (or a very limited set of templates) and use external tooling to ensure the correct actions are taken. Oracle has recently changed some of its policies, and the Oracle Linux template for Oracle VM which you can download from the Oracle website is nothing more than a bare minimum installation in which almost every package you might take for granted is missing. This means that there is no overhead of packages and started services that you do not want or need. The system is fully yours to configure. This configuration can be done by using first boot scripting, which you would need to build and customize for a large part yourself, or you can use external tooling for this.

A good solution for this is to make use of Puppet. This would mean that the first boot script only needs to be able to install the Puppet agent on the newly created virtual machine. By making use of node classification, the Puppet master will be able to see what the intended use of this new machine is and install packages and configure the machine accordingly.
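As a rough illustration of how small such a first boot script can be, the sketch below only renders a minimal agent configuration. It is not taken from an Oracle template. The host name puppet.example.com, the package name "puppet" and the configuration path /etc/puppet/puppet.conf are assumptions which you will need to adapt to your own Puppet version and environment.

```shell
#!/bin/sh
# First boot sketch: render a minimal puppet.conf for the agent.
# Assumptions (not from the original post): the Puppet master is reachable
# as puppet.example.com and the agent package is simply called "puppet".

render_puppet_conf() {
    # $1 = host name of the Puppet master
    # $2 = certificate name this node presents to the master
    printf '[agent]\nserver = %s\ncertname = %s\n' "$1" "$2"
}

# Intended use on first boot (commented out so the sketch has no side effects):
#   yum install -y puppet
#   render_puppet_conf puppet.example.com "$(hostname -f)" > /etc/puppet/puppet.conf
#   puppet agent --test
```

Everything else, such as which packages to install and how to configure them, is then decided on the Puppet master based on how the node is classified, so the template itself does not need to change.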

Even though this is not part of the Oracle best practices, it is a solution for companies who have a large set of different types of virtual machines which they need to be able to provision automatically or semi-automatically. By implementing Puppet you will be able to keep the number of Oracle VM templates to a minimum and keep the maintenance on the templates extremely limited. All changes to the provisioning of a certain type of virtual machine can be done by changing Puppet scripts. An additional benefit is that this is seen as a non-intrusive customization to the Oracle VM way of working. This way you can stay true to the Oracle best practices and add the Puppet best practices.

As a warning, a number of scripts for Oracle databases and other Oracle software are available on the Puppet website. Even though they do tend to work, it is advised to be extremely cautious about using them, and you should be aware that they might harm your application and database software installation. It is good practice to look at their inner workings before applying them in your production cloud. However, once tested and approved to be working for your needs, they might help you to speed up deployments.

Saturday, June 07, 2014

Oracle Cloud Periodic Table

Cloud computing, and cloud in general, is a much discussed topic which defines a new era of computing and changes how we think about computing. Defining the cloud is hard and very much depends on your point of view. Many vendors have tried to describe what cloud computing is, and you might find that they all have a different explanation due to their point of view. This makes creating a single description of cloud computing hard. When you are looking for a pure definition of cloud computing, the best source to turn to is NIST (National Institute of Standards and Technology), whose definition of cloud computing might be one of the best ways of stating it.

The NIST definition lists five essential characteristics of cloud computing: on-demand self-service, broad network access, resource pooling, rapid elasticity or expansion, and measured service. It also lists three "service models" (software, platform and infrastructure), and four "deployment models" (private, community, public and hybrid) that together categorize ways to deliver cloud services. The definition is intended to serve as a means for broad comparisons of cloud services and deployment strategies, and to provide a baseline for discussion from what is cloud computing to how to best use cloud computing.

To help customers understand cloud and cloud computing better, and to show that cloud computing is not a single solution but consists of many solutions which can be combined to form other solutions, Oracle has released a short video called the Oracle Cloud Periodic Table, which creates this mindset by using an analogy with the Periodic Table of Elements.

This video shows the vision of Oracle on cloud computing, or at least a part of that vision, and creates a mindset to understand that your specific cloud solution will most likely be a combination of a number of modules which are offered from within a cloud platform. Oracle is not the only one making use of this model. It is a growing trend in hybrid clouds and is largely based upon open standards and the as-a-Service way of thinking.

Wednesday, June 04, 2014

Define new blackout reasons in Oracle Enterprise Manager 12c

Oracle Enterprise Manager is positioned by Oracle as the standard solution for monitoring and managing Oracle and non-Oracle hardware and software. Oracle Enterprise Manager is quickly becoming the standard tooling for many organisations who operate an Oracle implementation inside their corporate IT landscape.

Oracle Enterprise Manager monitors all targets and has the ability to alert administrators when an issue occurs. For example when a host or a database is down an alert is created and additional notification can be triggered.

In essence this is a great solution. However, in cases where you do maintenance, for example, you do not want Oracle Enterprise Manager to send out an alert, as you intentionally bring down some components or create a situation which might trigger an alert. For this Oracle provides you the ability to create a blackout from both the graphical user interface and the CLI. A blackout prevents alerts from being sent out so you can do your maintenance (as an example).

One of the things you have to provide during the creation of a blackout is the blackout reason, which is a pre-set description of the blackout. This comes in addition to a free text description. The blackout reason enables you to report on blackouts in a more efficient manner.

Oracle provides a number of blackout reasons, however your organisation might require other blackout reasons specific to your situation. When using the CLI you can query all the blackout reasons and you can also create new blackout reasons.

To get an overview of all blackout reasons that have been defined in your Oracle Enterprise Manager instance you can execute the following command:

emcli get_blackout_reasons

In case the default set of blackout reasons does not provide the blackout reason you require, you can create your own custom blackout reason. When using the CLI you can execute the following command:

emcli add_blackout_reason -name=""

As an example, if you need a reason named "DOWNTIME monthly cold backup" you should execute the following command. The next time you execute get_blackout_reasons you should see this new reason in the list. The new reason is also directly available in the GUI when creating a blackout.

emcli add_blackout_reason -name="DOWNTIME monthly cold backup"
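If you want to script this, for example to keep several Oracle Enterprise Manager instances in sync, the two commands above can be combined so that a reason is only added when it is not yet listed. The sketch below is a minimal illustration and not an Oracle supplied script. The function name ensure_blackout_reason is hypothetical, and it assumes that you are already logged in with emcli and that get_blackout_reasons prints the reason text on a line of its own.

```shell
#!/bin/sh
# Add a blackout reason only when it is not already defined.
# Assumptions: an emcli session is already established, and the output of
# "emcli get_blackout_reasons" contains each reason as plain text.

ensure_blackout_reason() {
    reason=$1
    # grep -F treats the reason as a fixed string, not as a regular expression.
    # Note that this is a substring match, so "backup" would also match
    # "DOWNTIME monthly cold backup".
    if emcli get_blackout_reasons | grep -qF "$reason"; then
        echo "exists: $reason"
    else
        emcli add_blackout_reason -name="$reason" && echo "added: $reason"
    fi
}

# Example call (commented out so the sketch has no side effects):
#   ensure_blackout_reason "DOWNTIME monthly cold backup"
```

Running the function a second time with the same reason should report that the reason exists instead of trying to add it again.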

Tuesday, June 03, 2014

Resolving missing YAML issue in Perl CPAN

When installing or updating modules in your Perl ecosystem you most likely use CPAN or CPANM. CPAN stands for Comprehensive Perl Archive Network and is the default location where a large set of additional modules for the Perl programming language reside and from which you can download them. CPAN provides an interactive CLI which you can use to install new modules. In essence CPAN is much more than just a tool and a download location: CPAN is a thriving ecosystem where people add new software and modules to the ever-growing CPAN archive on a daily basis. The image below shows what the CPAN ecosystem looks like from a high level perspective:

When you use the CPAN CLI out of the box you might be hit with a number of warnings about YAML missing. YAML stands for YAML Ain't Markup Language and is a human friendly data serialization standard for all programming languages, with implementations for C/C++, Ruby, Python, Java, Perl, C#, .NET, PHP, OCaml, JavaScript, ActionScript, Haskell and a number of others.

The warning messages you get when using CPAN might look like the ones below:

"'YAML' not installed, falling back to Data::Dumper and Storable to read prefs '/root/.cpan/prefs'"

"Warning (usually harmless): 'YAML' not installed, will not store persistent state"

The way to resolve this is very simple. First ensure you have the YAML module installed. If this is the case, inform CPAN that you would like to use YAML. This can be done by executing the two commands below inside the CPAN shell, which should resolve the issue of the repeating warnings when using CPAN.

o conf yaml_module YAML

o conf commit

Exalogic and Oracle Ops Center

Oracle Exalogic, as part of the Oracle Engineered Systems portfolio, can be completely managed by making use of Oracle Enterprise Manager. It is part of Oracle's strategy to ensure all products can be tied into Oracle Enterprise Manager as the central maintenance and monitoring solution for the enterprise. Oracle Enterprise Manager has its roots as a software monitoring and management tool and is traditionally not used for hardware monitoring and maintenance. Oracle OPS center, originally from Sun, is built for these tasks.

Oracle has integrated the two solutions, Oracle Enterprise Manager and Oracle OPS center, to form a single view. Even though under the hood they are still two different products, you see that the integration is getting more and more mature and that the two products start to act as one, where the OEM12C core is used for software tasks and the OPS core is used for hardware tasks. The image below shows the split between the two products.