Customer experience is becoming increasingly important in both B2B and B2C. When a customer has a bad experience with your brand or the service you provide, the chance that this customer will purchase something or return in the future becomes very small. Likewise, if the customer has a good experience but lacks an emotional bond with your brand or product, the chance that they will become a brand advocate is small.

The challenge companies face is that it is increasingly important to get the entire customer experience and customer journey right while also creating an emotional bond. As a large part of the customer journey moves to the digital world, a good digital customer journey is becoming vital to the success of your brand.

As more and more companies invest heavily in the digital customer journey, a well-functioning and attractive online shopping experience is by far not enough. To succeed, you will have to go a step further than the competition and ensure that even a negative experience can be turned into a positive one.

The negative experience

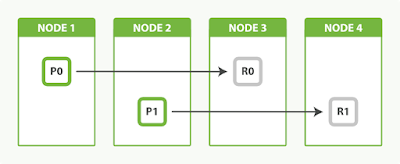

The diagram above from koobr.com showcases a customer journey that contains a negative experience. It shows that the customer wanted to purchase an item and found that the item was not in stock.

This presents two challenges: the first is how to ensure the customer will not purchase the item somewhere else, and the second is how to turn a negative experience into a positive one.

Turning things positive

In the koobr.com example, a number of actions are taken on the out-of-stock issue:

- Company sends offer for their website

- Company emails when item is in stock

- Company tweets when item is in stock

This all assumes that we know who the customer is or that we can get the user to reveal who they are. If we do not know the customer, we can display a message stating that the customer can register for an alert when the item is back in stock, and that a discount will be given as soon as it is. The promise of a future discount on the item also helps to make sure the customer will not purchase somewhere else.

Making a connection

How you can contact the customer when the item is back in stock depends on whether we know who the customer is and which contact details we have. If we know who the customer is, we can provide a discount specific to this customer or provide another benefit.

The default way of connecting with a customer one-on-one is sending an email to the address we have registered for this customer. Many other methods are available, however, and depending on geographical location and demographic parameters, better options can be selected.

For example:

- A teenage girl might be more likely to respond if we send her a private message via Facebook Messenger.

- A young adult male in Europe might be more likely to respond if we send a private message via WhatsApp.

- A young adult female in Asia might be more likely to respond if we use WeChat.

- A Canadian male might prefer to receive an email informing him that an item is back in stock.

- A senior citizen might be more receptive to a phone call informing him that the item is back in stock.

Relying only on email and a generic tweet on Twitter will yield some conversion, but much less than might be achieved by taking more demographic parameters and multiple channels into account.
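As a rough illustration of selecting a channel per customer, the sketch below ranks contact channels from a small profile and falls back to email. The `Customer` fields, the channel names and the age and region thresholds are all assumptions made for this example; in practice the rules should come from your own conversion data rather than from hard-coded guesses.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Customer:
    """Hypothetical customer profile; every field name is an assumption."""
    age: Optional[int] = None
    region: Optional[str] = None      # e.g. "Europe", "Asia"
    channels: tuple = ("email",)      # channels we hold contact details for


def preferred_channels(customer: Customer) -> list:
    """Return reachable channels, most promising first, ending with email."""
    ranked = []
    if customer.age is not None:
        if customer.age < 20:
            ranked.append("facebook_messenger")
        elif customer.age >= 65:
            ranked.append("phone")
        elif customer.age < 35:
            if customer.region == "Europe":
                ranked.append("whatsapp")
            elif customer.region == "Asia":
                ranked.append("wechat")
    ranked.append("email")  # default one-on-one channel
    # Keep only the channels we can actually reach this customer on.
    return [c for c in ranked if c in customer.channels]


if __name__ == "__main__":
    shopper = Customer(age=25, region="Europe", channels=("email", "whatsapp"))
    print(preferred_channels(shopper))
```

Keeping email as the last entry means an unknown or sparsely profiled customer is still contacted through the default channel.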

Keep learning

One of the most important parts of a strategy as outlined above is ensuring that your company keeps learning and that every action, as well as the resulting reaction, is captured. In this case, no reaction is also a reaction. Combining constant monitoring of every action and reaction with a growing profile of both the individual customer and the entire customer base provides the dataset upon which you can define the best action to counteract a negative experience and to strengthen the emotional bond between your customer and your brand.
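One possible way to capture actions and reactions, including the absence of a reaction, is sketched below. The class names, the reaction labels and the seven-day window are assumptions chosen for illustration and are not taken from any specific product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Action:
    customer_id: str
    channel: str
    sent_at: datetime
    reaction: Optional[str] = None  # e.g. "opened", "purchased"


@dataclass
class InteractionLog:
    actions: list = field(default_factory=list)

    def record_action(self, customer_id, channel, sent_at):
        """Store an outbound action and return it so a reaction can be set."""
        action = Action(customer_id, channel, sent_at)
        self.actions.append(action)
        return action

    def close_silent(self, now, window=timedelta(days=7)):
        """Label actions still unanswered after `window` as 'no_reaction'."""
        for a in self.actions:
            if a.reaction is None and now - a.sent_at >= window:
                a.reaction = "no_reaction"

    def conversion_by_channel(self):
        """Share of actions per channel that ended in a purchase."""
        totals, wins = {}, {}
        for a in self.actions:
            totals[a.channel] = totals.get(a.channel, 0) + 1
            if a.reaction == "purchased":
                wins[a.channel] = wins.get(a.channel, 0) + 1
        return {ch: wins.get(ch, 0) / n for ch, n in totals.items()}
```

Recording silence explicitly keeps unanswered messages in the denominator, so a channel that is often ignored shows up with a lower conversion rate instead of disappearing from the data.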

Integrate everything

A strategy like this needs to be supported by a technology stack. The biggest mistake a company can make is building a solution for this strategy in isolation and creating a new data silo. Customers are not interested in which department handles which part of the customer journey; from the outside, the company is one entity, not a collection of departments.

It is crucial that your marketing, sales, aftercare, web-care, and even logistics and finance departments make use of a single set of data and add new information to this dataset.

To ensure this, the strategy needs to make use of an integrated solution. An example of such an integrated solution is the Oracle Cloud stack, where, for example, the Oracle Customer Experience social cloud solution is fully integrated with Oracle marketing, services, sales and commerce.

Even though this might be the ideal situation and provides a very good solution for a greenfield implementation, many companies will not start in a greenfield. They will adopt a strategy like this in an existing ecosystem of different applications and data stores.

This means it is vital to break down data silos within your existing ecosystem and ensure that together they provide a unified view of your customer and of all actions directly and indirectly related to the customer experience.

In conclusion

Creating a good customer experience and building an emotional relationship between customer and brand is vital. Nurturing this depends very much on the demographic parameters of each individual customer, and both a good customer experience and a strong relationship require having all data available and capturing every event.

Adopting a winning strategy involves more than selecting a tool. It requires identifying all available data and all data that can potentially be captured, and ensuring it is generally available so the best possible action can be selected.

Implementing a full end-to-end strategy will be a company-wide effort and will involve all business departments as well as the IT department.